Mar 29, 2026

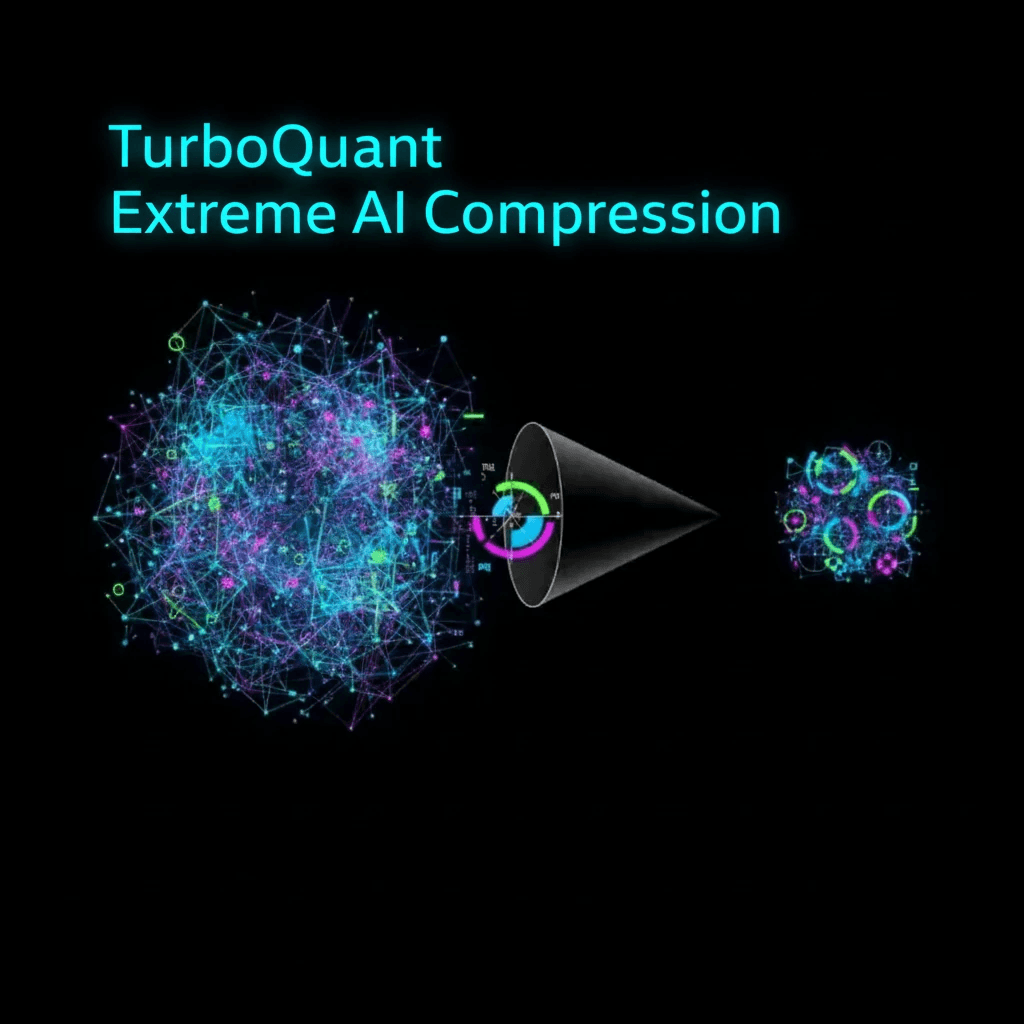

TurboQuant: Google’s New Algorithm Promises Extreme AI Compression Without Performance Loss

Google DeepMind’s TurboQuant combines Quantized Johnson–Lindenstrauss with a new PolarQuant technique to compress high‑dimensional vectors more efficiently. The approach removes extra normalization and constant storage overhead, potentially reducing memory costs for large language models and vector search systems while maintaining performance.

TurboQuant, a new compression algorithm from Google DeepMind, promises to sharply cut AI memory usage without hurting model quality. Detailed in a recent technical report, it tackles a long‑standing problem in vector quantization: conventional methods shrink the high‑dimensional vectors used in key‑value caches and vector search, but they must also store quantization constants alongside the compressed data, and that extra memory partly negates the savings.
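To get a feel for why stored constants matter, here is a rough back‑of‑the‑envelope sketch. The block size and constant widths are illustrative assumptions rather than figures from the report, and the helper names `effective_bits_per_value` and `overhead_fraction` are hypothetical.

```python
def effective_bits_per_value(code_bits: int, block_size: int, constant_bits: int) -> float:
    """Average storage per value when every block also stores its own constants."""
    return code_bits + constant_bits / block_size


def overhead_fraction(code_bits: int, block_size: int, constant_bits: int) -> float:
    """Extra storage for constants, relative to the quantized codes alone."""
    return (constant_bits / block_size) / code_bits


# Assumed setup: blocks of 32 values, each block keeping a 16-bit scale
# and a 16-bit zero point (32 bits of constants per block).
for code_bits in (4, 2):
    total = effective_bits_per_value(code_bits, block_size=32, constant_bits=32)
    extra = overhead_fraction(code_bits, block_size=32, constant_bits=32)
    print(f"{code_bits}-bit codes: {total} bits per value, {extra:.0%} overhead")
```

Under these assumptions the constants add one bit per value, so the relative overhead grows as the codes themselves get smaller. That is the cost a scheme without per‑block constants would avoid.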

TurboQuant combines Quantized Johnson–Lindenstrauss (QJL) with a novel technique called PolarQuant. PolarQuant converts vectors from standard Cartesian coordinates into polar coordinates (a radius and a set of angles) and exploits the predictable pattern of the angles to avoid expensive normalization and per‑block constants. In the reported tests, TurboQuant, QJL, and PolarQuant each showed strong potential to relieve key‑value cache bottlenecks while maintaining model performance.
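The two ideas can be illustrated with a toy sketch. This is not the algorithm from the report. It assumes a simplified setup in which coordinates are paired into 2‑D points, angles are quantized on a fixed uniform grid, and radii are kept at full precision. The second half shows a generic one‑bit random‑projection sketch in the spirit of QJL. All function names are hypothetical.

```python
import math
import random


def to_polar_pairs(vec):
    """Turn consecutive (x, y) pairs into (radius, angle) pairs. Assumes even length."""
    return [(math.hypot(x, y), math.atan2(y, x)) for x, y in zip(vec[0::2], vec[1::2])]


def quantize_angle(theta, bits):
    """Map an angle in [-pi, pi] to one of 2**bits bins on a fixed grid."""
    levels = 1 << bits
    return int((theta + math.pi) / (2 * math.pi) * levels) % levels


def dequantize_angle(code, bits):
    """Return the centre of the bin; the grid is fixed, so no per-block scale is stored."""
    levels = 1 << bits
    return -math.pi + (code + 0.5) * (2 * math.pi / levels)


def polar_roundtrip(vec, bits):
    """Quantize only the angles, then map back to Cartesian coordinates."""
    out = []
    for r, theta in to_polar_pairs(vec):
        theta_hat = dequantize_angle(quantize_angle(theta, bits), bits)
        out.extend((r * math.cos(theta_hat), r * math.sin(theta_hat)))
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sign_sketch(vec, projections):
    """One bit per random Gaussian projection: keep only the sign."""
    return [1 if dot(row, vec) >= 0 else -1 for row in projections]


def estimate_inner_product(query, key_signs, key_norm, projections):
    """Estimate <query, key> from the key's sign bits and its norm.

    Uses E[(s.q) * sign(s.k)] = sqrt(2/pi) * <q, k> / ||k|| for Gaussian s.
    """
    m = len(projections)
    total = sum(dot(row, query) * s for row, s in zip(projections, key_signs))
    return math.sqrt(math.pi / 2) * key_norm / m * total


rng = random.Random(0)
key = [rng.gauss(0, 1) for _ in range(16)]
query = [rng.gauss(0, 1) for _ in range(16)]

approx_key = polar_roundtrip(key, bits=4)
print("polar round-trip error:", math.dist(key, approx_key))

projections = [[rng.gauss(0, 1) for _ in range(16)] for _ in range(2000)]
estimate = estimate_inner_product(
    query, sign_sketch(key, projections), math.sqrt(dot(key, key)), projections
)
print("true inner product:", dot(query, key), "estimate:", estimate)
```

Because every angle lies in the same known range, the quantization grid never has to be stored with the data. The sign sketch likewise keeps only one bit per projection plus a single norm for the key.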

This work could enable more efficient large‑scale language models and vector search systems by significantly reducing memory costs.

Reference: Google Research